First, let me explain what an “SEO website audit” is. A site audit is a 30- to 50-page report that gives you a detailed snapshot in time of how your site is performing in the search engines. We currently present these SEO audits at the final client meeting, where we spend a good amount of time going over the report with the stakeholders to make sure the client understands it and knows what actions need to be taken and why. Below is a brief audit that covers important aspects of a site's visibility and structure.

Check the Number of Indexed Pages

Use the “site:” operator in Google and in Bing.

Examples:

- http://www.google.com/search?q=site%3Awww.lebseodesign.com

- http://www.bing.com/search?q=site%3Awww.lebseodesign.com

Make note of the data.
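
If you audit several domains, the two example URLs above can be built rather than typed by hand. The following is a minimal sketch in Python; the function name `site_query_urls` is made up for illustration, and it only constructs the URLs (it does not fetch or parse the result counts).

```python
from urllib.parse import quote


def site_query_urls(domain):
    """Return the Google and Bing "site:" search URLs for a domain."""
    # Percent-encode the whole query so ":" becomes "%3A", as in the examples.
    query = quote(f"site:{domain}", safe="")
    return {
        "google": f"http://www.google.com/search?q={query}",
        "bing": f"http://www.bing.com/search?q={query}",
    }


urls = site_query_urls("www.lebseodesign.com")
print(urls["google"])
print(urls["bing"])
```

Open each URL in a browser and write down the number of results each engine reports.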

What does this data mean?

These numbers indicate whether your domain is being properly indexed by Google and Bing. This is important to know because indexation in the major search engines strongly correlates with the amount of traffic they send to your website.

Solutions:

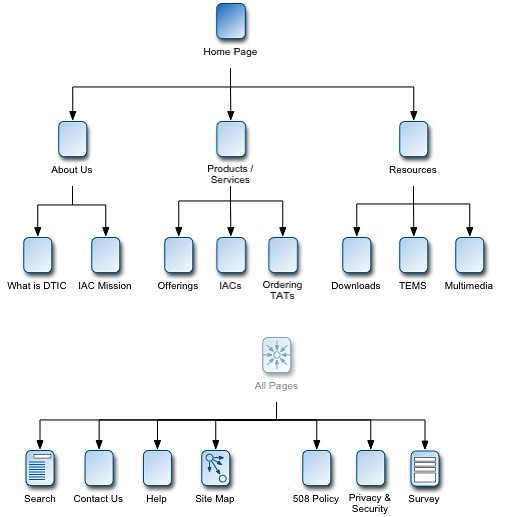

- Create a Sitemap.xml and an HTML sitemap and submit them to Google/Bing Webmaster Tools

- Improve internal linking so that important pages are linked from other pages on the site

Look For Duplicate Content

To find out whether your site is experiencing duplicate content issues, try the following queries, which can help you identify URLs where your content is duplicated.

- Phrase test: If a page contains a phrase such as “Beirut is the capital and largest city of Lebanon”, copy it and search for it on Google (including the quotes). Google will return all the pages that include exactly this phrase; skim through the results to find potentially copied content.

- Allintitle command: allintitle is a Google advanced search operator that restricts the results to pages with all of the query words in the title. For example, take your homepage title tag and search for it on Google: allintitle:"this is my title tag". Skim through the results and see whether you are serving Google duplicated content from your site.

- For ecommerce sites, check each product page. For example, the product page for a blue and yellow widget can be listed under the yellow category (domain.com/category/yellow/product.html) and also under the blue category (domain.com/category/blue/product.html). This is a big duplicate content flag.
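
The ecommerce check above can be roughed out in code if you already have a list of crawled URLs. This is a minimal Python sketch, assuming that the same file name under different category paths points to the same product; the function name `find_shared_product_pages` and the sample list are made up for illustration. A matching file name is only a hint, so compare the actual page content before treating a pair as duplicates.

```python
from collections import defaultdict
from urllib.parse import urlparse


def find_shared_product_pages(urls):
    """Group URLs by their last path segment and keep groups with more than one URL."""
    groups = defaultdict(list)
    for url in urls:
        path = urlparse(url).path
        # Take the final segment of the path, e.g. "product.html".
        filename = path.rstrip("/").rsplit("/", 1)[-1]
        if filename:
            groups[filename].append(url)
    return {name: found for name, found in groups.items() if len(found) > 1}


# Hypothetical crawl output, based on the widget example above.
crawled = [
    "http://domain.com/category/yellow/product.html",
    "http://domain.com/category/blue/product.html",
    "http://domain.com/category/blue/other.html",
]
duplicates = find_shared_product_pages(crawled)
for name, found in duplicates.items():
    print(name, found)
```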

Solutions:

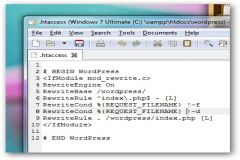

- Use a 301 redirect: Add a 301 redirect in your .htaccess file to send all search crawlers to the canonical version of your page.

- Use a canonical tag: Add <link rel="canonical" href="http://www.yourdomain.com/page.html" /> to the HEAD section of the page.
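
To confirm that a page actually carries the canonical tag, you can read it out of the HTML. Below is a minimal Python sketch using the standard library parser; the class and function names are made up for illustration, and the sample HTML is a stand-in for a page you would download yourself.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Remember the href of the first <link rel="canonical"> tag seen."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        # rel can hold several space-separated values, so split before checking.
        rel = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel:
            self.canonical = attributes.get("href")


def find_canonical(html):
    """Return the canonical URL declared in the HTML, or None if there is none."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


page = (
    '<html><head><link rel="canonical" '
    'href="http://www.yourdomain.com/page.html" /></head><body></body></html>'
)
print(find_canonical(page))
```

Run it against each duplicate URL: every version should report the same canonical address.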

Do a Quick Redirect Check

Use a tool such as Web Sniffer. For example, go to http://yourdomain.com and see if it redirects to http://www.yourdomain.com. Then check Web Sniffer to make sure the redirect used was a 301 rather than a meta refresh or a 302 redirect. Here is a very informative video about site canonicalization by Matt Cutts.
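
If you prefer a script to a web tool, the same check can be sketched in Python. This is a rough illustration, not a replacement for Web Sniffer: the function names are made up, the meta refresh detection is a simple pattern match, and `check_redirect` needs network access to the site you pass in.

```python
import http.client
import re
from urllib.parse import urlparse


def classify_response(status, body=""):
    """Label a response as a 301, a 302, another 3xx, a meta refresh, or no redirect."""
    if status == 301:
        return "301 permanent redirect"
    if status == 302:
        return "302 temporary redirect"
    if 300 <= status < 400:
        return f"{status} redirect"
    # A meta refresh arrives as a normal page that carries a refresh tag.
    if re.search(r'<meta[^>]+http-equiv=["\']?refresh', body, re.IGNORECASE):
        return "meta refresh"
    return "no redirect"


def check_redirect(url):
    """Fetch a URL without following redirects; return (label, Location header)."""
    parts = urlparse(url)
    if parts.scheme == "https":
        connection = http.client.HTTPSConnection(parts.netloc, timeout=10)
    else:
        connection = http.client.HTTPConnection(parts.netloc, timeout=10)
    try:
        connection.request("GET", parts.path or "/")
        response = connection.getresponse()
        body = response.read().decode("utf-8", errors="replace")
        return classify_response(response.status, body), response.getheader("Location")
    finally:
        connection.close()
```

Calling `check_redirect("http://yourdomain.com")` should ideally return the 301 label together with the www address in the Location header.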

Check the XML Sitemap File

Search engines use sitemaps to learn the location of the webpages on a domain. Adding an XML sitemap to your website will likely create a short-term boost in the number of pages indexed by the engines.
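
For a small site, a basic sitemap can be generated and sanity-checked with a few lines of Python. This is a minimal sketch that writes only the required loc element for each URL, following the sitemaps.org 0.9 schema; the function names and the sample URLs are made up for illustration.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return sitemap XML text with one <url><loc> entry per URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


def count_sitemap_urls(xml_text):
    """Count the <url> entries in sitemap XML text."""
    root = ET.fromstring(xml_text)
    return len(root.findall(f"{{{SITEMAP_NS}}}url"))


# Hypothetical page list for illustration.
sitemap = build_sitemap([
    "http://www.yourdomain.com/",
    "http://www.yourdomain.com/page.html",
])
print(count_sitemap_urls(sitemap))
```

Comparing the count from the sitemap with the number of indexed pages you noted earlier gives a quick sense of how much of the site the engines have picked up.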

Review the Robots.txt File

Some webmasters and developers run into problems where they accidentally block important sections of their content from search engine crawlers.

Go to Google Webmaster Tools, then go to the “Crawler Access” section under Site Configuration and use the built-in robots.txt checker. This will show you the pages that Google thinks are blocked by the robots.txt file.
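
You can also test a robots.txt file locally before it goes live. The sketch below uses Python's standard `urllib.robotparser`; the helper name `blocked_urls`, the sample rules, and the page list are made up for illustration. Note that this parser follows the basic robots.txt rules and may not match Google's handling of wildcard patterns, so treat the Webmaster Tools checker as the final word.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for a crawler."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt that blocks the whole product section by mistake.
robots_txt = """\
User-agent: *
Disallow: /products/
"""
pages = [
    "http://www.yourdomain.com/products/widget.html",
    "http://www.yourdomain.com/about.html",
]
print(blocked_urls(robots_txt, pages))
```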

—–

While some ecommerce sites have positive attributes from a UX (user experience) point of view, they may fall into some deep SEO pitfalls. This quick audit will help you find an important portion of a site's major problems.